Valentine

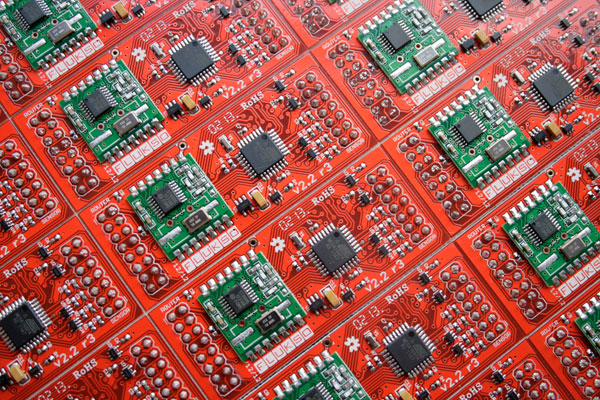

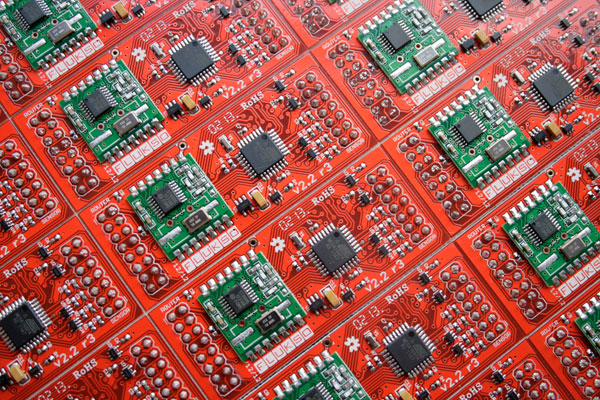

It's always nice when your shipper delivers a much-awaited package on Valentine's Day. Mine contained the first production run of the version 2.2 sensor boards. Initial testing looks good, with more probing planned for next week.

Flukso HQ will be moving on February 1st. You can find the new address in the contact info. Hence, no orders will be shipped from now until the start of next week. I'm swallowing the red pill and hoping to be back in Wonderland on Monday!

While the current Fluksometer daemon implementation has proven to be very robust, it was written for a unidirectional data flow. Readings from the sensor board are sourced at the SPI interface, timestamped, processed and sunk into a buffer. This buffer is periodically sent to the Flukso server in an HTTPS call.
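To make "unidirectional" concrete, here is a minimal Python sketch of that source, timestamp, process, buffer and periodic-upload chain. It is an illustration under assumptions, not the daemon's code: `OneWayPipeline`, the `source` and `sink` callables (standing in for the SPI reader and the HTTPS upload), the reading tuple layout and the flush interval are all hypothetical. Note that nothing in it offers a path for data or commands to flow back into the chain.

```python
import time
from collections import deque
from typing import Callable, Deque, List, Tuple

# Hypothetical reading layout: (timestamp, sensor_id, value).
Reading = Tuple[float, str, float]


class OneWayPipeline:
    """Unidirectional chain: source -> timestamp -> process -> buffer -> flush.

    `source` stands in for the SPI reader and `sink` for the periodic HTTPS
    upload; both are hypothetical callables, not the daemon's real API.
    """

    def __init__(self,
                 source: Callable[[], List[Tuple[str, float]]],
                 sink: Callable[[List[Reading]], None],
                 flush_interval: float,
                 clock: Callable[[], float] = time.time) -> None:
        self.source = source
        self.sink = sink
        self.flush_interval = flush_interval
        self.clock = clock
        self.buffer: Deque[Reading] = deque()
        self.last_flush = clock()

    def process(self, sensor_id: str, value: float) -> float:
        # Placeholder for the real processing step; identity here.
        return value

    def step(self) -> None:
        now = self.clock()
        # Source: pull raw readings, then timestamp and process each one.
        for sensor_id, raw in self.source():
            self.buffer.append((now, sensor_id, self.process(sensor_id, raw)))
        # Sink: hand the whole buffer to the uploader once the interval elapses.
        if self.buffer and now - self.last_flush >= self.flush_interval:
            self.sink(list(self.buffer))
            self.buffer.clear()
            self.last_flush = now


# Usage with a fake clock: three steps 30 s apart, one flush at t = 60 s.
fake_now = [0.0]
uploads: List[List[Reading]] = []
pipeline = OneWayPipeline(source=lambda: [("sensor1", 1.0)],
                          sink=uploads.append,
                          flush_interval=60.0,
                          clock=lambda: fake_now[0])
for t in (0.0, 30.0, 60.0):
    fake_now[0] = t
    pipeline.step()
```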

To make the Fluksometer software architecture future-proof, it should provide asynchronous bi-directional communication between internal daemon components as well as external clients. The architecture should allow multiple consumers of incoming data and be modular, extensible and fault-tolerant. We can meet both the asynchronous two-way communication requirement and the multiple-consumer requirement by introducing a message broker as a central component. Since this broker needs to run on an embedded machine like the Fluksometer, it should be lightweight as well. An attractive candidate is Roger Light's Mosquitto, an open-source message broker implementing the MQ Telemetry Transport (MQTT) protocol.
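A broker decouples producers from consumers: a component publishes a reading once, and every subscriber whose topic filter matches receives a copy. The Python sketch below models that fan-out in a single process, using simplified MQTT topic-filter rules (`+` matches one level, `#` matches the remainder). `TinyBroker`, `topic_matches` and the `sensor/...` topic names are hypothetical illustrations; this is not how Mosquitto is implemented, and it has no networking, QoS or retained messages.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[str, bytes], None]


def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT-style topic filter matching.

    '+' matches exactly one level; '#' (last level only) matches the parent
    level and everything below it. Simplified: '$'-prefixed topics get no
    special treatment here.
    """
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    for i, level in enumerate(f_levels):
        if level == "#":
            return i == len(f_levels) - 1
        if i >= len(t_levels):
            return False
        if level != "+" and level != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)


class TinyBroker:
    """In-process stand-in for a broker: one publish, any number of consumers."""

    def __init__(self) -> None:
        self.subscriptions: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic_filter: str, handler: Handler) -> None:
        self.subscriptions[topic_filter].append(handler)

    def publish(self, topic: str, payload: bytes) -> int:
        """Deliver to every matching subscriber; return the delivery count."""
        delivered = 0
        for topic_filter, handlers in self.subscriptions.items():
            if topic_matches(topic_filter, topic):
                for handler in handlers:
                    handler(topic, payload)
                    delivered += 1
        return delivered


# Usage: two independent consumers of the same (hypothetical) sensor topic.
broker = TinyBroker()
logged: List[bytes] = []
forwarded: List[str] = []
broker.subscribe("sensor/+/gauge", lambda topic, payload: logged.append(payload))
broker.subscribe("sensor/#", lambda topic, payload: forwarded.append(topic))
count = broker.publish("sensor/abc123/gauge", b"230")
```

Adding a third consumer is just another `subscribe` call; the publisher does not change, which is the modularity argument for putting a broker at the center.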

A big plus of using Mosquitto is that it comes with libmosquitto, a C client library for talking to an MQTT broker. I wrote a set of Lua bindings to libmosquitto. The bindings allow us to integrate the MQTT TCP connection into an external event loop, which minimizes message latency compared to a polling-based implementation.
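The idea behind event-loop integration: instead of letting the MQTT library poll on its own, the application asks it for its socket, watches that socket alongside its other file descriptors, and calls the library's read, write and housekeeping hooks only when needed. libmosquitto exposes hooks of this kind (`mosquitto_socket()`, `mosquitto_want_write()`, `mosquitto_loop_read()`, `mosquitto_loop_write()`, `mosquitto_loop_misc()`). The Python sketch below mirrors that pattern with the standard `selectors` module. `StubMqttClient` and `run_once` are hypothetical stand-ins that move raw bytes over a socket pair; they are not the lua-mosquitto API and do no MQTT framing.

```python
import selectors
import socket
from typing import List


class StubMqttClient:
    """Hypothetical client exposing libmosquitto-style loop hooks.

    It only shuttles raw bytes over a socket; no MQTT framing is done.
    """

    def __init__(self, sock: socket.socket) -> None:
        self._sock = sock
        self._sock.setblocking(False)
        self.outbox: List[bytes] = []  # bytes queued for sending
        self.inbox: List[bytes] = []   # bytes received so far
        self.misc_calls = 0

    def socket(self) -> socket.socket:      # cf. mosquitto_socket()
        return self._sock

    def want_write(self) -> bool:           # cf. mosquitto_want_write()
        return bool(self.outbox)

    def loop_read(self) -> None:            # cf. mosquitto_loop_read()
        data = self._sock.recv(4096)
        if data:
            self.inbox.append(data)

    def loop_write(self) -> None:           # cf. mosquitto_loop_write()
        while self.outbox:
            sent = self._sock.send(self.outbox[0])
            if sent < len(self.outbox[0]):
                self.outbox[0] = self.outbox[0][sent:]  # keep the unsent tail
                break
            self.outbox.pop(0)

    def loop_misc(self) -> None:            # cf. mosquitto_loop_misc()
        self.misc_calls += 1  # the real call handles keepalives and retries


def run_once(sel: selectors.BaseSelector, client: StubMqttClient,
             timeout: float) -> None:
    """One iteration of an external event loop that also services MQTT."""
    events = selectors.EVENT_READ
    if client.want_write():
        events |= selectors.EVENT_WRITE  # only wake for writability if needed
    sel.modify(client.socket(), events)
    for key, mask in sel.select(timeout):
        if key.fileobj is client.socket():
            if mask & selectors.EVENT_READ:
                client.loop_read()
            if mask & selectors.EVENT_WRITE:
                client.loop_write()
        # Other registered fds (sensor pipes, timers, ...) would be handled here.
    client.loop_misc()


# Usage: a socketpair plays the broker connection.
broker_side, client_side = socket.socketpair()
client = StubMqttClient(client_side)
sel = selectors.DefaultSelector()
sel.register(client.socket(), selectors.EVENT_READ)

client.outbox.append(b"PUBLISH")
run_once(sel, client, timeout=1.0)      # socket is writable: bytes go out
received = broker_side.recv(64)

broker_side.sendall(b"PUBACK")
run_once(sel, client, timeout=1.0)      # socket is readable: bytes come in

sel.close()
broker_side.close()
client_side.close()
```

Because the loop blocks in `select()` until a descriptor is ready, an incoming message is handled as soon as it arrives rather than at the next polling tick, which is where the latency gain comes from.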

I then wrote a broker performance test based on the lua-mosquitto bindings. The test sets up a process ring: it creates N processes, which are chained together through the broker. M messages are injected into the ring, each with a TTL (time-to-live). Once a message has passed TTL times through the broker, it is reported back to the master process. When all messages are accounted for by the master process, the broker's message throughput is calculated. Here's an example of a ring test running on my laptop against a Mosquitto broker on the FLM.

1000 messages flow in and out of the MQTT broker 1000 times, with 100 nodes on the laptop acting as MQTT clients.
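The bookkeeping of the ring test can be modelled in a single Python process, with a FIFO deque standing in for the broker. This is a hypothetical sketch (`ring_test` and `RingMsg` are illustrative names) that reproduces only the counting logic described above: N nodes, M messages with a TTL, a report back to the master when the TTL runs out, and throughput computed as total broker passes divided by elapsed time. It does not model the separate processes or network hops of the real lua-mosquitto test, and it assumes report-back messages are not counted as passes.

```python
import time
from collections import deque
from dataclasses import dataclass
from typing import Deque, Set, Tuple


@dataclass
class RingMsg:
    msg_id: int
    ttl: int   # remaining passes through the broker
    dest: int  # ring node the broker will deliver this message to


def ring_test(n_nodes: int, n_msgs: int, ttl: int) -> Tuple[int, float, float]:
    """Single-process model of the ring benchmark.

    A FIFO deque stands in for the broker: each popleft() is one pass.
    Returns (total passes, elapsed seconds, passes per second).
    """
    if n_nodes < 1 or n_msgs < 0 or ttl < 1:
        raise ValueError("need n_nodes >= 1, n_msgs >= 0 and ttl >= 1")

    broker: Deque[RingMsg] = deque()
    reported: Set[int] = set()
    passes = 0
    start = time.perf_counter()

    # The master injects M messages, spread round-robin over the N nodes.
    for i in range(n_msgs):
        broker.append(RingMsg(i, ttl, i % n_nodes))

    while broker:
        msg = broker.popleft()  # the broker delivers msg to node msg.dest
        passes += 1
        msg.ttl -= 1
        if msg.ttl == 0:
            reported.add(msg.msg_id)  # node reports back to the master
        else:
            msg.dest = (msg.dest + 1) % n_nodes  # forward to the next node
            broker.append(msg)

    elapsed = time.perf_counter() - start
    if len(reported) != n_msgs:
        raise RuntimeError("some messages were never accounted for")
    rate = passes / elapsed if elapsed > 0 else float("inf")
    return passes, elapsed, rate


# Usage: a scaled-down run (the run above used 100 nodes, 1000 msgs, TTL 1000).
passes, elapsed, rate = ring_test(n_nodes=100, n_msgs=100, ttl=100)
```

Total passes always come out as M × TTL, independent of N; the ring size only determines which client handles each hop.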

Out of curiosity, I also ran the test against a Mosquitto broker on the laptop itself. So instead of a 180MHz MIPS 4KEc, we've now got a 2GHz Sandy Bridge i7-2630QM hosting Mosquitto. The core count doesn't matter much, since the broker is single-threaded.

So while the FLM took 2000+ seconds to process the 1 million messages (1000 messages × 1000 passes), the Intel Core i7 got the job done in less than 20 seconds, churning through 50k+ messages per second. That's a difference of two orders of magnitude. While 500 msg/sec is still more than adequate for the tasks at hand on the FLM, it's a good reminder that the FLM is still an embedded system.
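The quoted rates follow directly from the ring parameters: the test pushes M × TTL messages through the broker, and dividing by the run times gives the throughput. Since the run times are given as bounds (2000+ s and under 20 s), the rates and their ratio are bounds too:

$$
\begin{aligned}
N_{\text{msg}} &= M \times \mathrm{TTL} = 1000 \times 1000 = 10^{6} \\
R_{\text{FLM}} &\le \frac{10^{6}}{2000\ \text{s}} = 500\ \text{msg/s} \\
R_{\text{i7}} &> \frac{10^{6}}{20\ \text{s}} = 5 \times 10^{4}\ \text{msg/s} \\
\frac{R_{\text{i7}}}{R_{\text{FLM}}} &> \frac{5 \times 10^{4}}{500} = 10^{2}
\end{aligned}
$$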

Flukso HQ is going offline for the next ten days for a well-deserved summer break. We'll be back on August 13.